Friday, February 27, 2026 · 4:00–5:30 pm · Northwest Building, Room B103

This session focuses on using AI for interactive data analysis with Python notebooks — the kind of exploratory, iterative work that researchers do every day: loading data, writing analysis code, making plots, and debugging along the way.

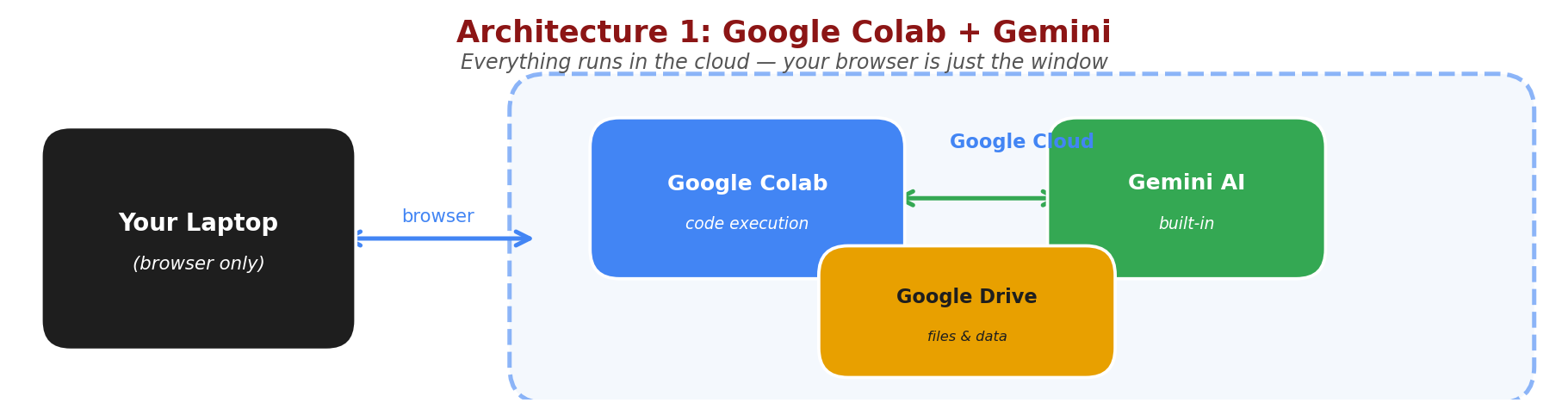

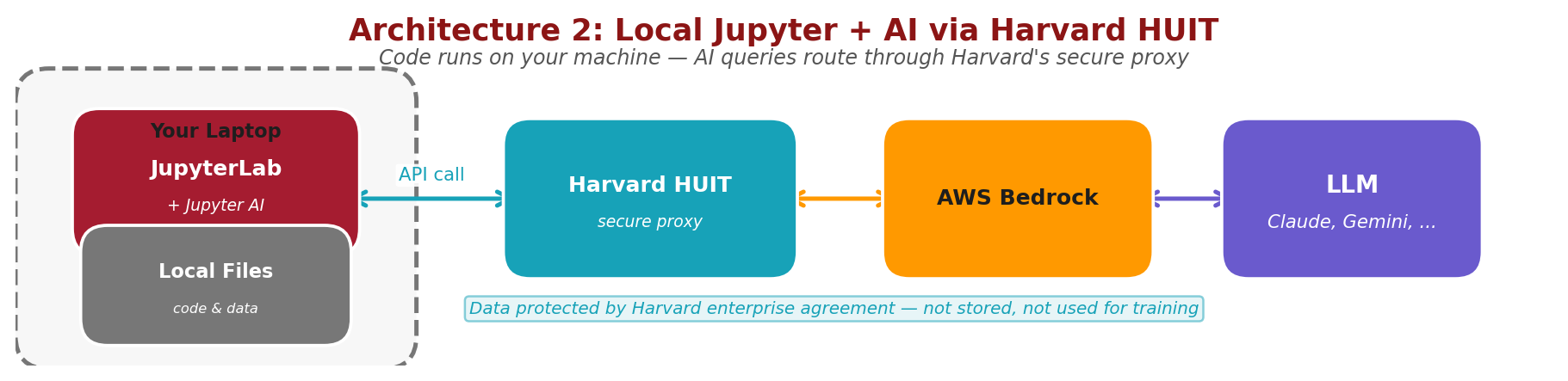

This session covers two different ways to use AI while writing Python code. Understanding the architecture helps you choose the right tool for the job.

In Exercise 1, your browser connects to Google Colab, which runs your code on Google's servers. Gemini AI is built in — everything happens in the cloud. Your laptop is just the display. Colab with Gemini is free through your Harvard Google account.
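Because a Colab notebook executes on Google's servers rather than on your laptop, it can only read files that are reachable from there, for example through a mounted Google Drive. The following is a minimal sketch of how a notebook might check where it is running and resolve a data file accordingly. The helper names `in_colab` and `data_path` are hypothetical, and the mount point and folder are assumed to be the usual Colab defaults; adjust them if your Drive is organized differently.

```python
import sys
from pathlib import Path

# Assumed defaults: the usual Colab mount point and default notebook folder.
DRIVE_MOUNT = Path("/content/drive")
COLAB_FOLDER = DRIVE_MOUNT / "MyDrive" / "Colab Notebooks"


def in_colab() -> bool:
    """Return True when this code is executing inside a Colab runtime."""
    # The Colab kernel loads the google.colab package at startup.
    return "google.colab" in sys.modules


def data_path(filename: str) -> Path:
    """Resolve a data file: the Drive folder on Colab, the working directory locally."""
    if in_colab():
        from google.colab import drive  # only importable on Colab

        drive.mount(str(DRIVE_MOUNT))  # asks for authorization on first use
        return COLAB_FOLDER / filename
    return Path.cwd() / filename


print(data_path("sdss_photometry.csv"))
```

On a laptop this prints a path in the current working directory; on Colab it mounts Drive first and points at the Colab Notebooks folder.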

In Exercise 2, JupyterLab runs on your own machine, so your code and data stay local. When you ask the AI for help, the query goes through Harvard's secure HUIT proxy to an LLM (Claude, Gemini, or others) via AWS Bedrock, and the response comes back the same way. The same HUIT API key works for all models available through Bedrock. Your data is protected by Harvard's enterprise agreement. API calls through HUIT are not free — they are billed to your PI's HUIT account, so coordinate with your advisor.

Before the session, please ensure you have:

Follow these steps to get the demonstration notebooks ready:

How to download: Control-click (Mac) or right-click (Windows) each button and select "Download Linked File" or "Save Link As..."

Notebook 1: AI in Colab

Notebook 2: File I/O with Google Drive

Notebook 3: Data Browsing

sdss_photometry.csv

Upload sdss_photometry.csv to the Colab Notebooks folder in your Google Drive (the same folder as the notebooks).

This session covers two ways to integrate AI with Python programming:

Google Colab has built-in Gemini integration, allowing you to get AI assistance while writing code. Three notebooks walk you through progressively:

!pip install

While Colab is convenient, many researchers prefer running Jupyter notebooks locally on their laptops for better control, offline access, and integration with local files. You can set up an AI chat assistant (Jupyternaut) directly inside JupyterLab, powered by Claude through Harvard's HUIT Bedrock proxy.

Mac Setup Guide

Windows Setup Guide

Step-by-step instructions for configuring Jupyter AI with Harvard's API endpoint

The setup guides (Mac | Windows) cover installation, environment configuration, Jupyternaut settings, available models, and troubleshooting.

Try it out: Once Jupyternaut is configured and responding, copy and paste this prompt into the Jupyternaut chat:

Can you make me a notebook that simulates a damped harmonic oscillator, in a new notebook. I want dynamic plots and adjustable parameters with slider controls.

Review the code Jupyternaut generates. Does it run? Do the sliders work? This is a good test of whether the AI can produce a complete, functional notebook from a single natural-language request.
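For comparison with whatever Jupyternaut produces, here is a small hand-written sketch of the same idea. It is not the code the AI will generate. It assumes the underdamped case with the oscillator released from rest, and the function names, parameter ranges, and time window are illustrative choices. The plotting and slider helpers rely on `matplotlib` and `ipywidgets`, which are imported only when those helpers are called.

```python
import math

T_MAX = 20.0     # length of the simulated time window (arbitrary units)
N_POINTS = 500   # number of samples along the time axis


def damped_position(t, omega0=2.0, zeta=0.1, x0=1.0):
    """Position of an underdamped oscillator released from rest at x0.

    Solves x'' + 2*zeta*omega0*x' + omega0**2 * x = 0 with x(0)=x0, x'(0)=0.
    """
    if not 0 <= zeta < 1:
        raise ValueError("this sketch only covers the underdamped case, 0 <= zeta < 1")
    omega_d = omega0 * math.sqrt(1.0 - zeta**2)  # damped angular frequency
    decay = math.exp(-zeta * omega0 * t)         # exponential envelope
    return x0 * decay * (
        math.cos(omega_d * t) + (zeta * omega0 / omega_d) * math.sin(omega_d * t)
    )


def plot_oscillator(omega0=2.0, zeta=0.1, x0=1.0):
    """Draw x(t) for one set of parameters (requires matplotlib)."""
    import matplotlib.pyplot as plt

    times = [T_MAX * i / (N_POINTS - 1) for i in range(N_POINTS)]
    plt.plot(times, [damped_position(t, omega0, zeta, x0) for t in times])
    plt.xlabel("time")
    plt.ylabel("displacement")
    plt.show()


def make_sliders():
    """Call in a notebook cell to get one slider per parameter (requires ipywidgets)."""
    from ipywidgets import FloatSlider, interact

    return interact(
        plot_oscillator,
        omega0=FloatSlider(value=2.0, min=0.5, max=10.0, step=0.1),
        zeta=FloatSlider(value=0.1, min=0.0, max=0.95, step=0.05),
        x0=FloatSlider(value=1.0, min=0.1, max=2.0, step=0.1),
    )


print(round(damped_position(0.0), 6))  # 1.0: the motion starts at x0
```

In a notebook, running `make_sliders()` redraws the curve each time a slider moves. Checking a generated notebook against a known closed-form solution like this one is a quick way to see whether the AI's physics is right.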

Next prompt: Now try this one, using the same data file from Exercise 1:

Now make another notebook that loads in and does some sensible analysis on sdss_photometry.csv

How does the AI decide what "sensible analysis" means for an astronomy dataset it hasn't seen before? Does it figure out what the columns are? What plots and statistics does it choose?
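One baseline for judging the AI's choices is the first pass a person might make on an unfamiliar table: list the columns, count missing values, and compute simple statistics for the numeric ones. The sketch below does this with the Python standard library only. The function name `summarize_csv` is hypothetical, and the column names in the sample are made up for illustration; they are not taken from sdss_photometry.csv.

```python
import csv
import io
import statistics


def summarize_csv(handle):
    """Return {column: summary dict} for a CSV file object with a header row."""
    reader = csv.DictReader(handle)
    columns = {name: [] for name in (reader.fieldnames or [])}
    for row in reader:
        for name in columns:
            columns[name].append((row.get(name) or "").strip())

    summary = {}
    for name, values in columns.items():
        present = [v for v in values if v != ""]
        numbers = []
        for v in present:
            try:
                numbers.append(float(v))
            except ValueError:
                break  # at least one non-numeric entry: treat the column as text
        info = {"rows": len(values), "missing": len(values) - len(present)}
        if present and len(numbers) == len(present):
            info.update(kind="numeric", min=min(numbers), max=max(numbers),
                        mean=statistics.fmean(numbers))
        else:
            info.update(kind="text", distinct=len(set(present)))
        summary[name] = info
    return summary


# Made-up sample rows; the real file's columns may differ.
sample = io.StringIO("objid,g_mag,class\n1,17.5,STAR\n2,,GALAXY\n3,18.5,STAR\n")
for column, info in summarize_csv(sample).items():
    print(column, info)
```

To run it on the real file, open it with `open("sdss_photometry.csv", newline="")` and pass the file object to `summarize_csv`. A generated notebook would more likely use pandas, but the questions it should answer are the same.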

Advantages of local notebooks with AI:

Calls to Harvard's API endpoint are billed to your PI's HUIT account. Set a monthly spending limit when you register your API key, monitor your usage regularly, and coordinate with your advisor about appropriate usage.
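To get a feel for how quickly usage adds up, a rough estimate can be sketched as follows. It assumes usage is billed per input and output token, as is common for hosted LLM APIs. The function names are hypothetical, and the prices in the example are placeholders, not actual HUIT or Bedrock rates; substitute the rates that apply to your model.

```python
def estimate_cost_usd(input_tokens, output_tokens,
                      usd_per_million_input, usd_per_million_output):
    """Estimated cost of one call, given per-million-token prices."""
    return (input_tokens * usd_per_million_input
            + output_tokens * usd_per_million_output) / 1_000_000


def remaining_budget_usd(monthly_limit_usd, calls,
                         usd_per_million_input, usd_per_million_output):
    """Monthly limit minus the estimated cost of (input_tokens, output_tokens) calls."""
    spent = sum(
        estimate_cost_usd(i, o, usd_per_million_input, usd_per_million_output)
        for i, o in calls
    )
    return monthly_limit_usd - spent


# Placeholder numbers for illustration only, not real prices or limits.
calls_so_far = [(2_000, 500), (10_000, 1_500)]
left = remaining_budget_usd(5.00, calls_so_far,
                            usd_per_million_input=1.0,
                            usd_per_million_output=2.0)
print(f"estimated budget remaining: ${left:.3f}")
```

An estimate like this does not replace the spending limit set on the API key; it only helps you anticipate when you might reach it.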

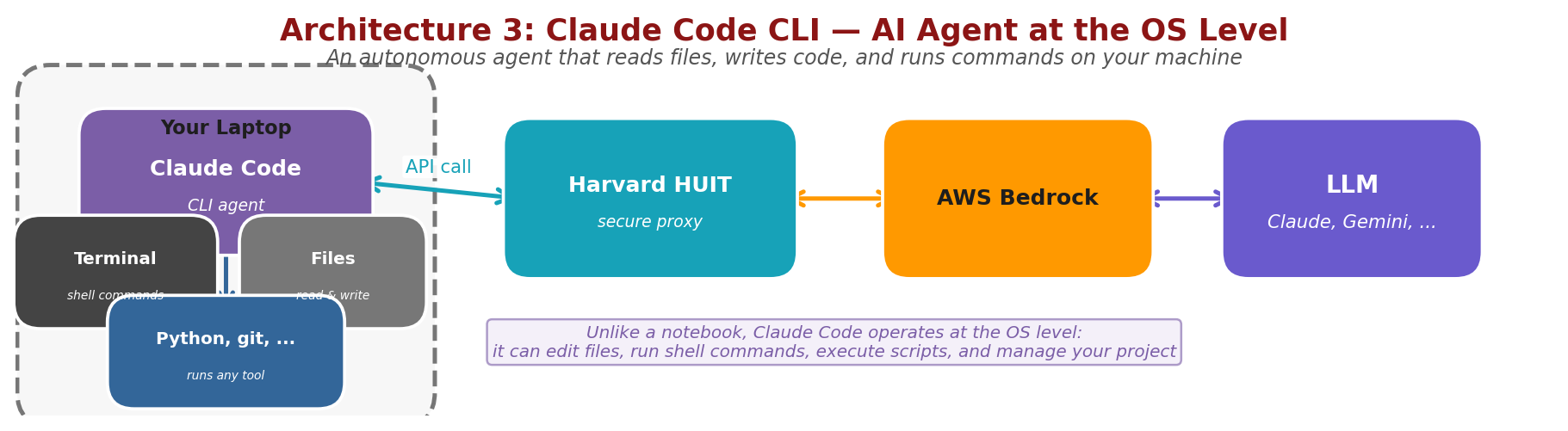

Next week, we'll explore Claude Code, a command-line AI agent that operates at the operating system level — not inside a notebook. It can read and write files anywhere on your machine, run shell commands, execute Python scripts, use git, and manage entire projects autonomously. Like the local Jupyter setup, it routes AI queries through Harvard's secure HUIT proxy.

Claude Code represents a more autonomous approach to AI-assisted programming — you describe what you want to accomplish, and it plans and executes the entire workflow.

No setup required before Session 3 — we'll walk through installation together during that session.

Please take a moment to share your feedback — it helps us improve future sessions.