This guide walks through configuring Jupyter AI (the JupyterLab AI chat assistant) to route queries through Harvard's HUIT Bedrock proxy. This gives you an interactive AI assistant directly inside JupyterLab on your local machine.

After the workshop, see Setting Up Anthropic API Access for Harvard Users for instructions on obtaining your own key.

Open Terminal and create a directory for this work:

mkdir -p ~/Desktop/GAI/exercises

cd ~/Desktop/GAI/exercises

You will run all subsequent commands from this directory.

Ensure you are running Python 3.13 (3.10 is the minimum). The system Python 3.9 that ships with the Xcode Command Line Tools is too old and causes package conflicts.

python3 --version

# Expected: Python 3.13.x

which python3

# Expected: /usr/local/bin/python3 (not /usr/bin/python3)

If you need to install Python 3.13, download it from python.org.
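The version requirement can also be checked in a script rather than by eye. The following is a minimal sketch, not part of the original setup: version_ok is a hypothetical helper name, and the parsing assumes that python3 --version prints a line of the form "Python 3.13.1".

```shell
# Hypothetical helper: succeeds if a "major.minor[.patch]" string is at least 3.10.
version_ok() {
    case "$1" in
        [0-9]*.[0-9]*) ;;          # looks like "major.minor..."
        *) return 1 ;;             # anything else is treated as unusable
    esac
    major=${1%%.*}                 # text before the first dot
    rest=${1#*.}                   # text after the first dot
    minor=${rest%%.*}              # text before the next dot
    if [ "$major" -gt 3 ] 2>/dev/null; then
        return 0
    fi
    [ "$major" -eq 3 ] 2>/dev/null && [ "$minor" -ge 10 ] 2>/dev/null
}

# Keep only the version number from the "Python X.Y.Z" line.
current=$(python3 --version 2>&1 | awk '{print $2}')
if version_ok "$current"; then
    echo "OK: Python $current"
else
    echo "Too old or not found: '$current' (need 3.10 or newer)"
fi
```

The comparison is done on the major and minor numbers separately because a plain string comparison would rank 3.9 above 3.10.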

Jupyter AI v3 is beta software that can pull in dependency versions incompatible with your existing Python packages. A virtual environment keeps everything isolated so nothing else on your system is affected.

python3 -m venv jupyter-ai-env

Activate it:

source jupyter-ai-env/bin/activate

Your terminal prompt should now show (jupyter-ai-env) at the beginning, confirming the virtual environment is active.
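If your prompt is customized and does not show the prefix, the environment can be checked another way. This is a small sketch that relies on the VIRTUAL_ENV variable exported by the standard venv activate script; venv_status is a hypothetical helper name.

```shell
# Hypothetical helper: report whether a virtual environment is active,
# based on the VIRTUAL_ENV variable that "activate" exports.
venv_status() {
    if [ -n "${VIRTUAL_ENV:-}" ]; then
        echo "active: $VIRTUAL_ENV"
    else
        echo "inactive"
    fi
}

venv_status
```

With the environment from this guide active, the reported path should end in jupyter-ai-env.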

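If you open a new terminal window later, return to the working directory and reactivate the environment:

cd ~/Desktop/GAI/exercises

source jupyter-ai-env/bin/activate

With the virtual environment active, install JupyterLab: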

pip install notebook jupyterlab

Verify the installation and launch JupyterLab to confirm it works:

jupyter lab

Close it (Ctrl+C in the terminal) once you've confirmed it launches, then continue to the next step.

The HUIT proxy speaks the AWS Bedrock Converse API format. Jupyter AI v3 uses LiteLLM as its backend, which has native support for Bedrock endpoints with Bearer token authentication. This is why v3 (beta) is required instead of the stable v2 release.

pip install "jupyter-ai==3.0.0b9" boto3

Verify:

pip show jupyter-ai

# Expected: Version: 3.0.0b9

We'll create a small shell script that sets the required environment variables. This keeps everything in your working directory rather than modifying your system-wide shell configuration.

Open the file in nano:

nano set_env.sh

Paste the following contents (replace your-huit-api-key-here with your actual API key):

#!/bin/zsh

# ---- HUIT Bedrock Proxy Configuration ----

# Source this script before launching JupyterLab:

# source ./set_env.sh

# API key for the HUIT Bedrock proxy

export ANTHROPIC_API_KEY="your-huit-api-key-here"

# Base URL for the HUIT Bedrock proxy

export ANTHROPIC_BEDROCK_BASE_URL=https://apis.huit.harvard.edu/ais-bedrock-llm/v2

# Required for Jupyter AI / LiteLLM

# LiteLLM uses this for Bearer token auth to Bedrock endpoints

# (use the same value as ANTHROPIC_API_KEY)

export AWS_BEARER_TOKEN_BEDROCK="$ANTHROPIC_API_KEY"

Save and exit nano (Ctrl+O, Enter, Ctrl+X). Then source the script:

source ./set_env.sh

Verify the key variables are set:

echo $AWS_BEARER_TOKEN_BEDROCK

echo $ANTHROPIC_API_KEY

# Both should print your API key

Why AWS_BEARER_TOKEN_BEDROCK? LiteLLM's Bedrock handler checks this variable to decide whether to use Bearer token authentication (your HUIT API key) or standard AWS SigV4 signing (which requires IAM credentials you don't have). Without it, you'll get "Unable to locate credentials."
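If you would rather not print the full key to the terminal (for example, while sharing your screen), the same check can be done with the value masked. This is a sketch only; show_masked is a hypothetical helper and is not part of Jupyter AI or LiteLLM.

```shell
# Hypothetical helper: report whether a variable is set without printing its full value.
show_masked() {
    name=$1
    eval "value=\${$name:-}"       # indirect lookup that works in both zsh and bash
    if [ -z "$value" ]; then
        echo "$name is NOT set"
    else
        suffix=$(printf '%s' "$value" | tail -c 4)
        echo "$name is set (ends in ...$suffix, ${#value} characters)"
    fi
}

show_masked ANTHROPIC_API_KEY
show_masked AWS_BEARER_TOKEN_BEDROCK

# The two variables are expected to hold the same key.
if [ -n "${ANTHROPIC_API_KEY:-}" ] &&
   [ "${ANTHROPIC_API_KEY:-}" = "${AWS_BEARER_TOKEN_BEDROCK:-}" ]; then
    echo "Both variables are set and identical"
else
    echo "One variable is missing, or the two values differ"
fi
```

Run it in the same terminal session in which you sourced set_env.sh, since the variables exist only in that session.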

From your working directory, activate the virtual environment, source the environment script, and launch:

cd ~/Desktop/GAI/exercises

source jupyter-ai-env/bin/activate

source ./set_env.sh

jupyter lab

In the JupyterLab interface, open the Jupyternaut (AI assistant) settings panel from the menu bar: Settings → Jupyternaut Settings.

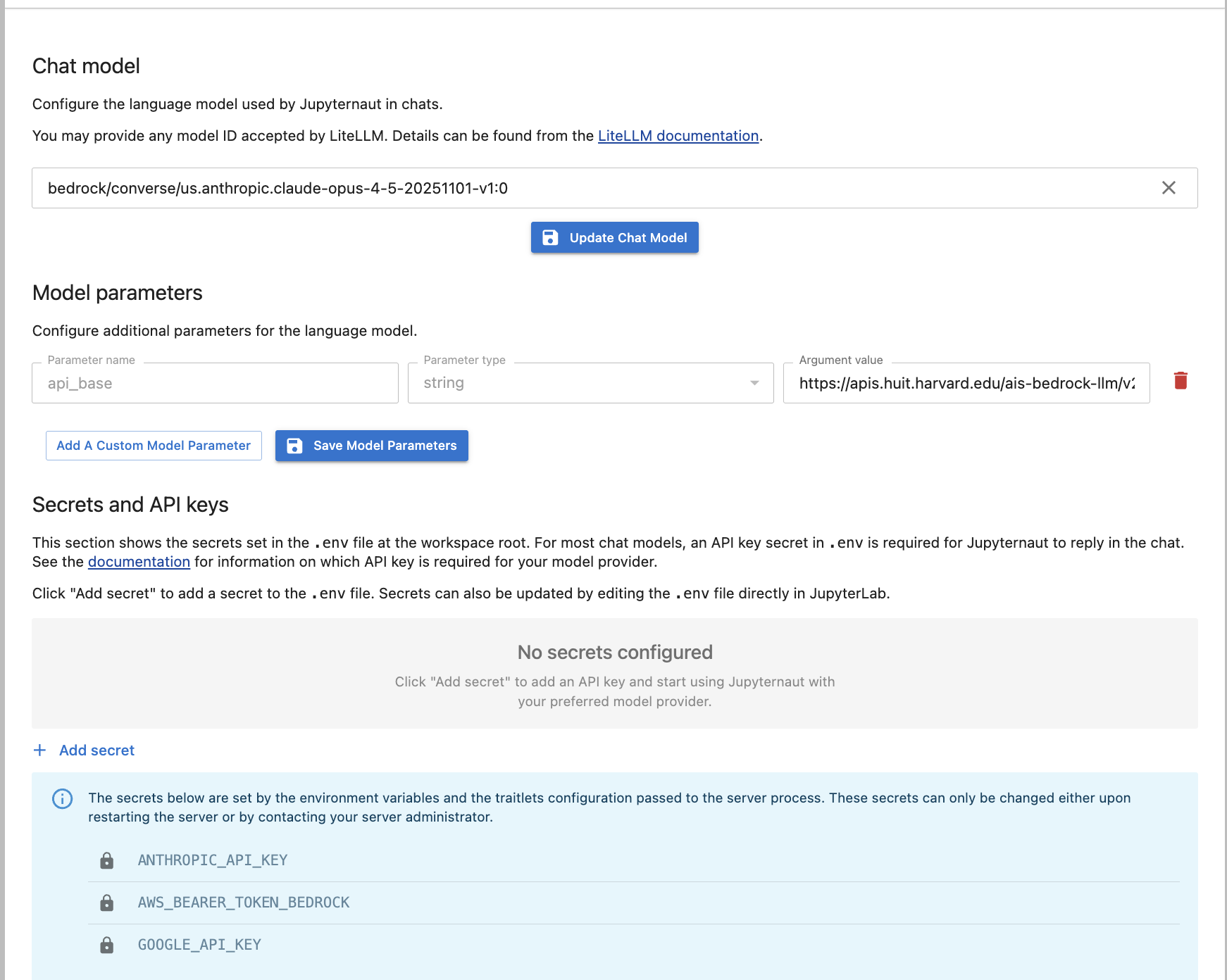

Your settings should look like this when properly configured:

In the Chat model ID field, enter:

bedrock/converse/us.anthropic.claude-opus-4-5-20251101-v1:0

The bedrock/converse/ prefix tells LiteLLM to use the Bedrock Converse API handler, which correctly constructs the endpoint URL and supports Bearer token auth. Do not use bedrock/converse_like/; it has URL construction issues with custom endpoints.
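Because the two prefixes differ by only a few characters, a quick check before pasting an ID into the settings panel can catch the mistake. The sketch below is illustrative: is_converse_model_id is a hypothetical helper that only tests the prefix and does not confirm that the model exists on the proxy.

```shell
# Hypothetical helper: succeeds only for IDs that start with "bedrock/converse/".
is_converse_model_id() {
    case "$1" in
        bedrock/converse/?*) return 0 ;;
        *) return 1 ;;
    esac
}

for id in \
    "bedrock/converse/us.anthropic.claude-opus-4-5-20251101-v1:0" \
    "bedrock/converse_like/us.anthropic.claude-opus-4-5-20251101-v1:0" \
    "us.anthropic.claude-opus-4-5-20251101-v1:0"
do
    if is_converse_model_id "$id"; then
        echo "ok:     $id"
    else
        echo "reject: $id"
    fi
done
```

Only the first ID in the loop passes; the converse_like variant and the bare Bedrock model name are both rejected.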

In the Model parameters section, add:

| Parameter Name | Type | Value |
|---|---|---|
| api_base | string | https://apis.huit.harvard.edu/ais-bedrock-llm/v2 |

In the Secrets and API keys section, confirm that AWS_BEARER_TOKEN_BEDROCK appears as a static (read-only) secret imported from your environment. If it doesn't appear, add it using the "Add secret" button.

Leave the Embedding model blank. It is only needed for the /learn document indexing feature and is not required for chat.

Click Update chat model, then send a test message in the Jupyternaut chat:

Hello, can you tell me what model you are?

Change the model ID in Jupyternaut settings to use different Claude models:

| Model | Chat Model ID |
|---|---|
| Claude Opus 4.5 | bedrock/converse/us.anthropic.claude-opus-4-5-20251101-v1:0 |
| Claude Opus 4.6 | bedrock/converse/us.anthropic.claude-opus-4-6-v1:0 |
| Claude Sonnet 4 | bedrock/converse/us.anthropic.claude-sonnet-4-20250514-v1:0 |
| Claude Haiku 3.5 | bedrock/converse/us.anthropic.claude-3-5-haiku-20241022-v1:0 |

If you see "Unable to locate credentials", LiteLLM is trying AWS SigV4 auth instead of Bearer token auth. Ensure AWS_BEARER_TOKEN_BEDROCK is set by running source ./set_env.sh before launching JupyterLab.

If requests fail with an authentication or authorization error, verify that AWS_BEARER_TOKEN_BEDROCK is set to the correct HUIT API key (same value as ANTHROPIC_API_KEY).

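Errors about unsupported parameters (for example, tool definitions) should not occur with bedrock/converse/, which supports tools. If one does, add the following line to set_env.sh and re-source it:

export LITELLM_DROP_PARAMS=true

Check the terminal where you launched jupyter lab for error tracebacks. Common causes include an incorrect model ID prefix (it should be bedrock/converse/) and a missing or incorrect api_base model parameter in Jupyternaut settings.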

You may be using the wrong API format. The HUIT proxy uses the Bedrock Converse API, not the native Anthropic Messages API. Do not use the anthropic-chat provider.
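To see what a Converse-format request looks like, and to test the proxy outside JupyterLab, a direct request with curl can help separate key or URL problems from Jupyternaut settings problems. The sketch below rests on two assumptions that this guide does not confirm: that the proxy exposes the standard Bedrock Converse path (/model/<model-id>/converse) under the base URL, and that it accepts the key as a Bearer token in the Authorization header. The names converse_url and converse_smoke_test are hypothetical helpers.

```shell
# Assumption: the proxy mirrors the standard Bedrock path /model/<model-id>/converse.
# Hypothetical helper: build the request URL from a base URL and a Bedrock model ID.
converse_url() {
    printf '%s/model/%s/converse\n' "${1%/}" "$2"
}

# Hypothetical smoke test: send one short user message in Converse format.
# Assumption: Bearer auth with AWS_BEARER_TOKEN_BEDROCK, as in the LiteLLM setup.
# Requires set_env.sh to have been sourced in this terminal session.
converse_smoke_test() {
    curl -sS -X POST "$(converse_url "$ANTHROPIC_BEDROCK_BASE_URL" "$1")" \
        -H "Authorization: Bearer $AWS_BEARER_TOKEN_BEDROCK" \
        -H "Content-Type: application/json" \
        -d '{"messages":[{"role":"user","content":[{"text":"Hello"}]}]}'
}

# Print the URL that would be requested (this line makes no network call).
converse_url "https://apis.huit.harvard.edu/ais-bedrock-llm/v2" \
    "us.anthropic.claude-opus-4-5-20251101-v1:0"
```

To send the request, run converse_smoke_test us.anthropic.claude-opus-4-5-20251101-v1:0, passing the Bedrock model ID without the bedrock/converse/ prefix, which is a LiteLLM routing hint rather than part of the model name. If a JSON reply comes back, the key and base URL are likely fine and the remaining suspects are the model ID and api_base entries in Jupyternaut settings.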

| Package | Version | Purpose |
|---|---|---|
| jupyter-ai | 3.0.0b9 | JupyterLab AI extension (LiteLLM backend) |
| boto3 | (latest) | AWS SDK, required by LiteLLM for Bedrock |
| notebook / jupyterlab | (latest) | Jupyter Notebook server and JupyterLab IDE |

| Variable | Used By | Purpose |
|---|---|---|
| ANTHROPIC_API_KEY | HUIT Bedrock proxy | Your HUIT API key (authenticates all Bedrock requests) |
| ANTHROPIC_BEDROCK_BASE_URL | HUIT Bedrock proxy | Harvard's secure proxy URL |
| AWS_BEARER_TOKEN_BEDROCK | Jupyter AI / LiteLLM | HUIT API key for LiteLLM Bearer auth (same value as ANTHROPIC_API_KEY) |